Bringing the model of our robot into a simulation environment

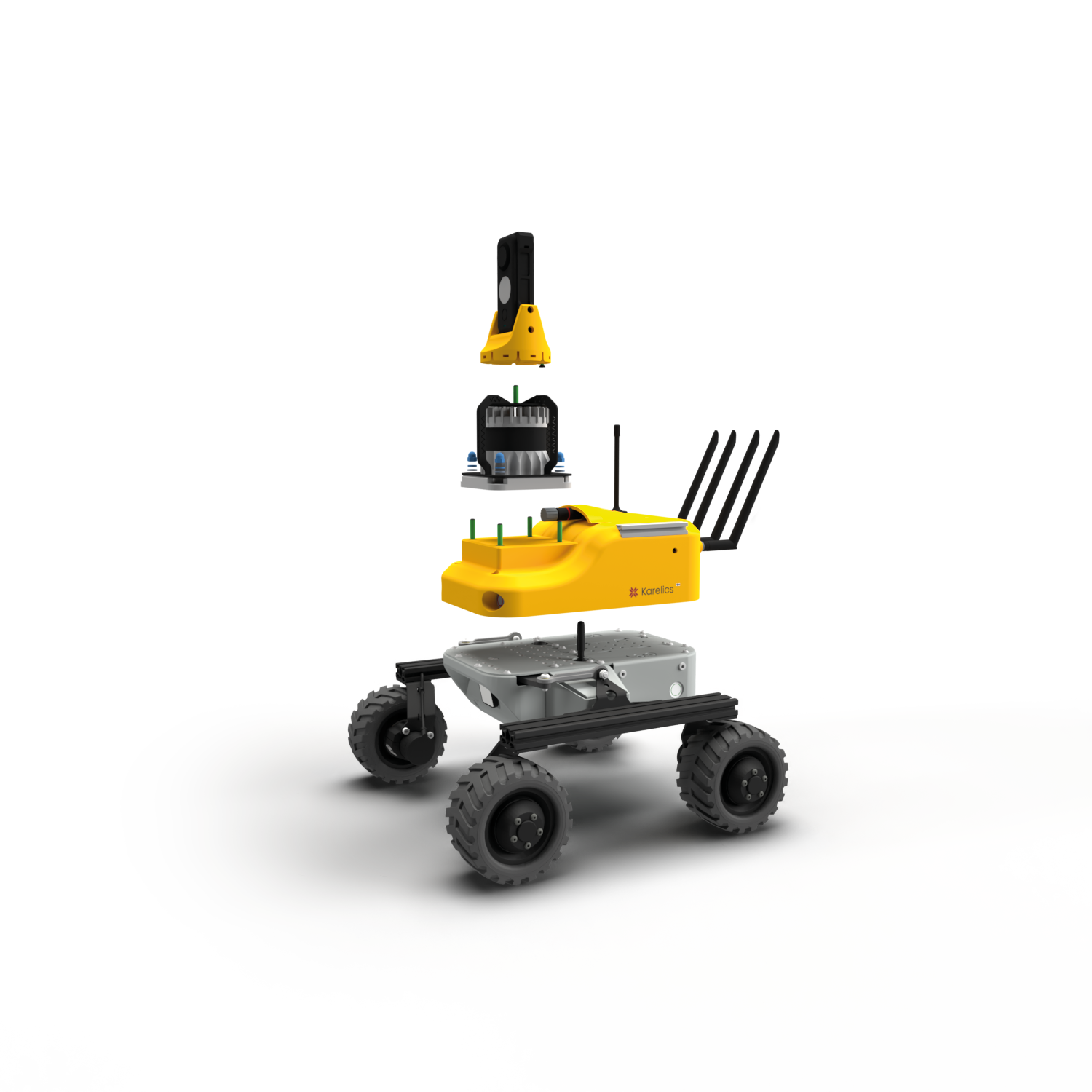

Our first task was to bring the 3D model of our robot into simulation so that we could eventually start experimenting with self-driving there. Gazebo is a suitable environment for this, so we needed to define our robot model in .dae (COLLADA) format. The model was originally developed in Solid Edge ST2 and exported in .stl format, which we then converted to .dae using Blender.

We plan to use ROS extensively for communication. The first question you face when using ROS and Gazebo is whether to run them locally or on a cloud platform such as ROS Development Studio. Although ROS Development Studio has already been valuable to us for its tutorials, at this point we decided to go with a local installation. The second question is which versions to use. ROS has recently released its successor, ROS 2, whose latest distribution is Eloquent Elusor, and it is also a viable option at this point. Which one should you use? We will come back to this later, but for now we settle on ROS Melodic, the most widely used distribution. Our setup is as follows:

- Ubuntu 18.04
- Python 3.6.9
- CUDA 10.0.130 (for GPU-accelerated machine learning)

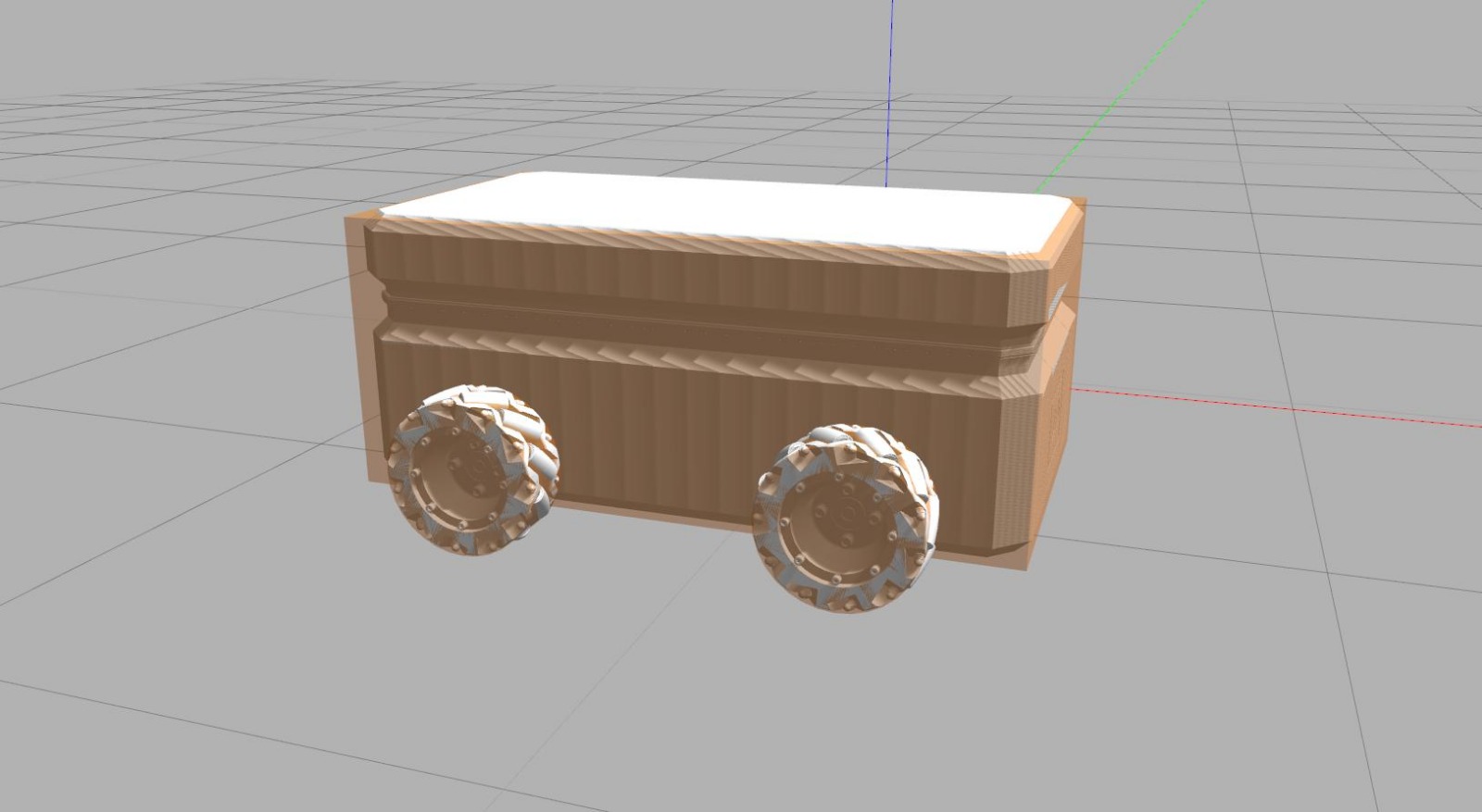

Based on this awesome tutorial about creating a virtual model of a mecanum-wheeled robot, we created our first simulated prototype that could move. Gazebo requires two aspects to be defined for the robot: visuals, which define how the robot looks, and collisions, which define its actual physical collision geometry. Both can use the same mesh files, but a large mesh file can slow down the simulation notably. Because our .dae file was over 600 MB, we defined the robot's collisions using simple geometric shapes (see image below), while the visuals were imported directly from the .dae file. Since the file used for the visuals is still huge, the simulation loads and runs notably slower: removing the visuals completely increased the frame rate from ~10 to 60 fps. We will need to simplify our visual model greatly to run the simulation efficiently.

In Gazebo, the robot's physics are defined by the collisions (orange) and its appearance by the visuals (white).
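To illustrate the visual/collision split, here is a minimal Python sketch that builds a URDF `<link>` whose visual references the heavy .dae mesh while its collision is a single box sized from the mesh's axis-aligned bounding box. The link name, the `package://my_robot_description/...` mesh path and the sample vertices are hypothetical; in practice the vertices would be read from the mesh itself, and the collision model described above uses several simple shapes rather than one box. A link that Gazebo should simulate also needs an `<inertial>` element, which is omitted here for brevity.

```python
import xml.etree.ElementTree as ET
from typing import Iterable, Tuple

Vec3 = Tuple[float, float, float]


def bounding_box(vertices: Iterable[Vec3]) -> Tuple[Vec3, Vec3]:
    """Return (size, center) of the axis-aligned box enclosing the vertices."""
    xs, ys, zs = zip(*vertices)
    low = (min(xs), min(ys), min(zs))
    high = (max(xs), max(ys), max(zs))
    size = tuple(h - l for l, h in zip(low, high))
    center = tuple((h + l) / 2 for l, h in zip(low, high))
    return size, center


def fmt(v: Vec3) -> str:
    """Format a 3-vector the way URDF expects: space-separated numbers."""
    return " ".join("{:g}".format(c) for c in v)


def make_link(name: str, mesh_uri: str, box_size: Vec3, box_center: Vec3) -> ET.Element:
    """Build a URDF <link> with a mesh visual and a primitive box collision."""
    link = ET.Element("link", name=name)

    # Visual: the detailed (and heavy) mesh, used only for rendering.
    visual = ET.SubElement(link, "visual")
    ET.SubElement(ET.SubElement(visual, "geometry"), "mesh", filename=mesh_uri)

    # Collision: a cheap box, offset so that it is centered on the mesh.
    collision = ET.SubElement(link, "collision")
    ET.SubElement(collision, "origin", xyz=fmt(box_center), rpy="0 0 0")
    ET.SubElement(ET.SubElement(collision, "geometry"), "box", size=fmt(box_size))
    return link


if __name__ == "__main__":
    # Hypothetical sample vertices in meters; real ones would come from the mesh.
    verts = [(-0.3, -0.2, 0.0), (0.3, 0.2, 0.25), (0.1, -0.1, 0.1)]
    size, center = bounding_box(verts)
    link = make_link("base_link", "package://my_robot_description/meshes/body.dae", size, center)
    print(ET.tostring(link, encoding="unicode"))
```

A single box is the crudest option; splitting the body into a few boxes and cylinders, as in the collision model above, follows the same pattern with more `<collision>` elements in the link.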

Using Gazebo's ROS integration, we managed to implement a simulated robot that can be driven around with teleoperation. The robot's mecanum wheels make it holonomic, allowing it to strafe sideways, which greatly extends its capabilities. The mecanum-wheel motion was implemented using the Planar Move Plugin.
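As far as we understand it, the Planar Move Plugin moves the robot base directly according to the commanded velocity, so the simulated wheels do not actually have to roll for the robot to strafe. For reference, the sketch below shows the standard mecanum inverse kinematics a physical base would need: it maps a body velocity (forward `vx`, leftward `vy`, counter-clockwise `wz`, following the ROS convention) to the angular speed of each wheel, plus the forward mapping back. The wheel radius `r` and the half-distances `lx` (half the wheelbase) and `ly` (half the track width) are hypothetical parameters, and the signs assume the common X-configuration of the rollers seen from above; other roller layouts change the signs.

```python
from typing import NamedTuple, Tuple


class WheelSpeeds(NamedTuple):
    """Wheel angular speeds in rad/s; positive means rolling forward."""
    front_left: float
    front_right: float
    rear_left: float
    rear_right: float


def mecanum_inverse(vx: float, vy: float, wz: float,
                    r: float, lx: float, ly: float) -> WheelSpeeds:
    """Body velocity (m/s, m/s, rad/s) -> wheel speeds (rad/s)."""
    k = lx + ly  # lever arm of the rotational component
    return WheelSpeeds(
        front_left=(vx - vy - k * wz) / r,
        front_right=(vx + vy + k * wz) / r,
        rear_left=(vx + vy - k * wz) / r,
        rear_right=(vx - vy + k * wz) / r,
    )


def mecanum_forward(w: WheelSpeeds, r: float, lx: float, ly: float) -> Tuple[float, float, float]:
    """Wheel speeds (rad/s) -> body velocity (vx, vy, wz); inverse of mecanum_inverse."""
    k = lx + ly
    vx = r / 4 * (w.front_left + w.front_right + w.rear_left + w.rear_right)
    vy = r / 4 * (-w.front_left + w.front_right + w.rear_left - w.rear_right)
    wz = r / (4 * k) * (-w.front_left + w.front_right - w.rear_left + w.rear_right)
    return vx, vy, wz


if __name__ == "__main__":
    # Hypothetical geometry: 5 cm wheel radius, wheels 0.2 m / 0.15 m from the center.
    strafe_left = mecanum_inverse(0.0, 0.5, 0.0, r=0.05, lx=0.2, ly=0.15)
    # Diagonal pairs spin in opposite directions: FL and RR backward, FR and RL forward.
    print(strafe_left)
```

The diagonal pattern in the usage example is what makes strafing possible: the roller forces of the two diagonal pairs cancel along the forward axis and add up sideways.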

We ran a short movement and collision test with the robot in a simulated parking hall, which you can find below. The visual model and the wall collisions in strafing mode are still unrealistic, so these are what we will look into next. Overall, though, the driving is stable and the robot can already do a lot, so we can now start experimenting with sensors, cameras and self-driving as well!

Initial driving test in simulated parking hall, using ROS teleoperations to control the robot
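During a teleoperation test like this one, each key press becomes a velocity command (forward, sideways and turning speed) in the robot's own frame, typically published as a `geometry_msgs/Twist` message, and the Planar Move Plugin applies it to the base. The sketch below approximates that loop in plain Python so it runs without ROS. The key layout, the `speed`/`turn` values and the fixed time step are hypothetical, and the pose update is a simple Euler integration that rotates the body-frame velocity by the current heading; this is our reading of what the plugin does, not its actual code.

```python
import math
from typing import Dict, Tuple

Cmd = Tuple[float, float, float]   # (vx, vy, wz) in the robot frame
Pose = Tuple[float, float, float]  # (x, y, yaw) in the world frame

# Hypothetical key layout, not the bindings of any particular teleop package.
KEY_BINDINGS: Dict[str, Cmd] = {
    "w": (1.0, 0.0, 0.0),   # forward
    "s": (-1.0, 0.0, 0.0),  # backward
    "a": (0.0, 1.0, 0.0),   # strafe left (possible only because the base is holonomic)
    "d": (0.0, -1.0, 0.0),  # strafe right
    "q": (0.0, 0.0, 1.0),   # rotate counter-clockwise
    "e": (0.0, 0.0, -1.0),  # rotate clockwise
}


def key_to_cmd(key: str, speed: float, turn: float) -> Cmd:
    """Scale the unit command for a key; unknown keys stop the robot."""
    ux, uy, uw = KEY_BINDINGS.get(key, (0.0, 0.0, 0.0))
    return ux * speed, uy * speed, uw * turn


def planar_step(pose: Pose, cmd: Cmd, dt: float) -> Pose:
    """Advance the pose by dt, rotating the body-frame velocity into the world frame."""
    x, y, yaw = pose
    vx, vy, wz = cmd
    x += (vx * math.cos(yaw) - vy * math.sin(yaw)) * dt
    y += (vx * math.sin(yaw) + vy * math.cos(yaw)) * dt
    return x, y, yaw + wz * dt


if __name__ == "__main__":
    pose: Pose = (0.0, 0.0, math.pi / 2)  # start facing world +y
    for _ in range(10):                  # hold "a" for 1 s at 10 Hz
        pose = planar_step(pose, key_to_cmd("a", speed=0.5, turn=1.0), dt=0.1)
    print(pose)  # strafing left while facing +y moves the robot toward world -x
```

Because the command is expressed in the robot's frame, the same "strafe left" key moves the robot in a different world direction depending on its heading, which is what the usage example demonstrates.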